{{item.title}}

{{item.text}}

{{item.text}}

This year we had a record number of respondents to our survey of which 33% of respondents represented organisations which had >10 billion USD in revenue, 20% of the respondents were from publicly listed organisations and 55% of the respondents belong to the tech and security function.

It’s no longer business-as-usual at your organisation. But most companies are still locked into cyber asusual, as the 2024 Global Digital Trust Insights survey shows. Fragmented initiatives. An ever-expanding array of technological complexities. A risk management programme that, with its gaps, is risky in itself. Transformations and projects that don’t produce the results you want. These stumbling blocks and others remain in the way of cybersecurity that’s truly trustworthy.

In the 2023 playbook, we identified critical challenges that C-suite executives should address together, as partners. These are still relevant.

Q10. To what extent is your organisation implementing or planning to implement the following cybersecurity initiatives?

Base: All respondents= 3876. Analysis technique utilised is factor analysis

Source: PwC, 2024 Global Digital Trust Insights.

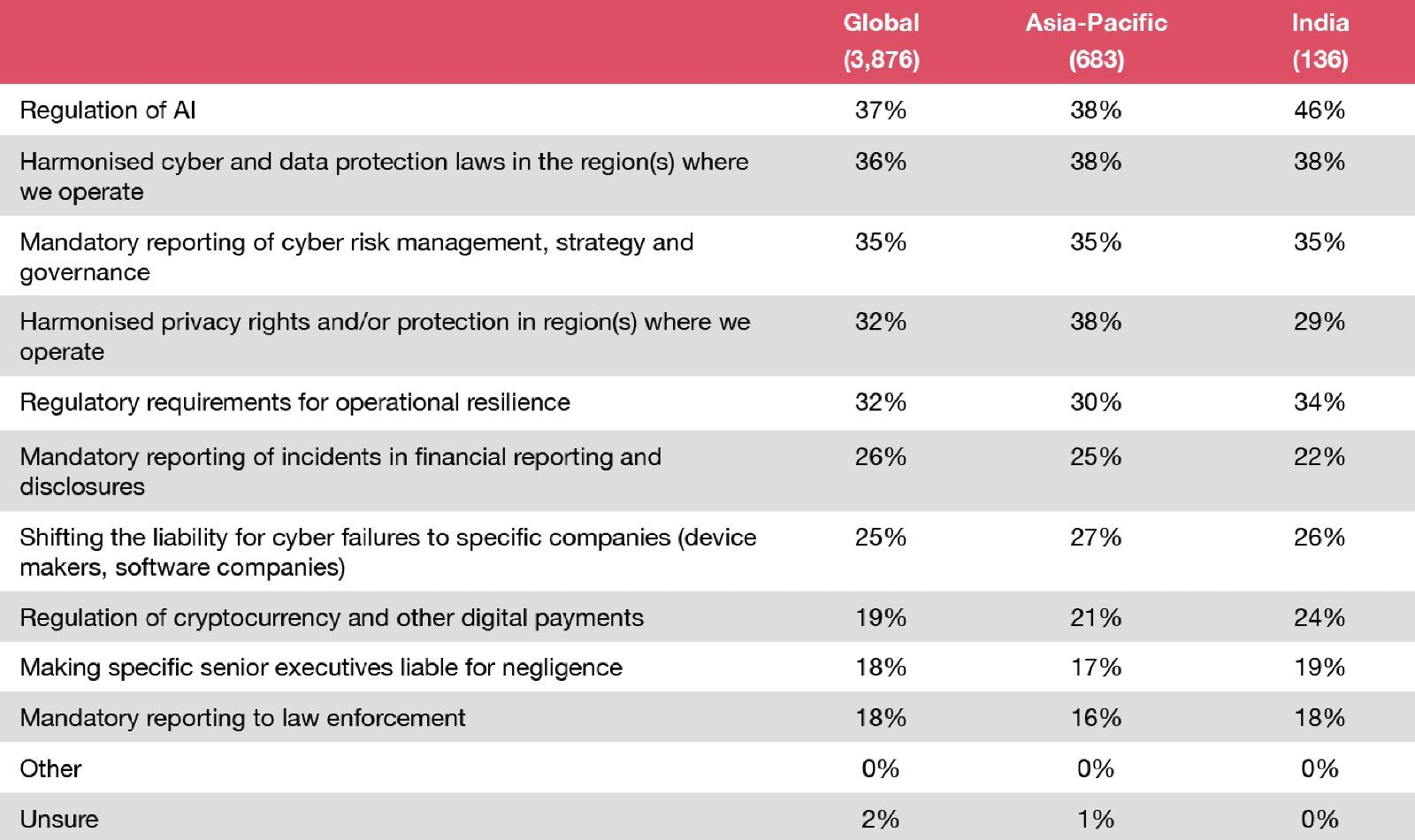

Our India respondents feel that regulation of AI, harmonised cyber and data protection laws, along with mandatory reporting of cyber risk management, strategy and governance will be most important for future growth. These aspects will not only provide a competitive advantage for organisations in India but will also help fuel innovation. When organisations know that they have assessed and addressed possible cyber risks, they feel more confident and empowered to explore and experiment.

Manu Dwivedi

Partner and Leader - Cybersecurity and Risk Consulting GCC, PwC India

For Indian organisations, state-level and sectoral regulations continue to steer systemic changes in cybersecurity and the adoption of principle-based ones seems to be more successful. But irrespective of compliance requirements, businesses are investing in building an all- encompassing cyber resilience programme.

Sundareshwar Krishnamurthy

Partner and Leader – Cybersecurity, PwC India

Speak a new language.

CISO

CFO

General Counsel

Placing yourself at the epicentere of innovation means meeting your leadership teams where they are and helping them to overcome the intimidation they might feel regarding what you do. Using insider terms such as cyber landscape, attack surface and even zero trust can only further mystify those outside your profession.

Dare to talk about cyber in business-speak, tech-speak, finance-speak or everyday-speak. Speak to your customers, investors and business partners in annual security reports in ways that inform and engage. Using common vocabularies can help executives wrestle with the trade-offs, tensions and chaos that inevitably happen at the epicentere of innovation

CISO

CRO

Internal audit

CCO

COO

Use more sophisticated approaches to cyber-risk modelling such as scanning for threats using formulas specific to your company’s sector, vision and strategy. Create a risk-linked performance incentive for every bonus-eligible employee in the company, to build a risk culture. Invent new ways to find and strengthen your weaknesses, perhaps with a modern bug bounty programme that incentivises independent security research. Finally, procure and begin using a cloudfirst, centrally managed identity solution to secure your business expansion goals.

CISO

CIO

General Counsel

Regulatory Affairs

Speak the language of trust, not just regulatory compliance. Involve yourself early and often for a better chance at influencing any new rules and ensuring that they boost, not hinder, business success. AI, the metaverse, cryptocurrency, privacy - the regulatory topics could well benefit from your experience and insights. Remember, regulators can feel as befuddled as anyone by the workings of cyber and tech.

CISO

CIO

CTO

CRO

COO

Providing you with round-the-clock eyes is one benefit of automation, GenAI and managed services. Performing mundane chores so your teams don’t have to is another.

Liberated from the tyranny of tedious tasks, your people may find time and space to ponder new cyber threats and create new ways to thwart evolving threats.

CISO

Board

CEO

Cyber tops the risk register in most companies and on many executive surveys. But is it a staple topic in your boardroom? Are you getting quality information not only on cyber risks and controls, but also on how major strategic initiatives are furthering business and revenue growth? Security provides the underpinnings for everything the organisation does: finance, development, personnel, technology and other areas of the business you likely discuss every time you meet.

Looking your cyber programme squarely in the eye can be a daring move.

CISO

CEO

Business transformation is one thing. Cyber transformation is not another. They are the same. The CISO and CEO together need to embrace cyber as a whole-of-business endeavour, putting themselves in the business owner’s shoes. Wouldn’t they want every aspect – financial records, proprietary research, application development, customer data and the like - protected from unauthorised viewing or use? Wouldn’t they want to safeguard their brand? Couldn’t cybersecurity spur innovations that save money and help the business to grow? This is the raison d’être of cyber.

More than two-thirds say they’ll use GenAI for cyber defence in the next 12 months.

Nearly half are already using it for cyber risk detection and mitigation.

One-fifth are already seeing benefits to their cyber programmes because of GenAI — mere months after its public debut.

Q7. To what extent do you agree or disagree with the following statements about Generative AI? Q10. To what extent is your organisation implementing or planning to implement the following cybersecurity initiatives?

Base: All respondents=3876

Source: PwC, 2024 Global Digital Trust Insights.

GenAI comes at an opportune time in cybersecurity.

For defence. Organisations have long been overwhelmed by the sheer number and complexity of human-led cyberattacks, both of which continually increase. And GenAI is making it easier to conduct complex cyber attacks at scale. Researchers found a 135% increase in novel social engineering attacks in just one month, from January to February 2023. Services like WormGPT and FraudGPT are enabling credential phishing and highly personalised business email compromise.

To secure innovation. Businesses eager to reap GenAI’s many potential benefits to develop new lines of business and increase employee productivity invite serious risks to privacy, cybersecurity, regulatory compliance, third-party relationships, legal obligations and intellectual property. So to get the most benefit from this groundbreaking technology, organisations should manage the wide array of risks it poses in a way that considers the business as a whole.

From reconnaissance to action, GenAI can be useful for defence all along the cyber kill chain. Here are the three most promising areas.

Threat detection and analysis. GenAI can be invaluable for proactively detecting vulnerability exploits, rapidly assessing their extent — what’s at risk, what’s already compromised and what the damages are — and presenting tried-and-true options for defence and remediation. GenAI can identify patterns, anomalies and indicators of compromise that elude traditional signature-based detection systems.

GenAI is strong at synthesising voluminous data on a cyber incident from multiple systems and sources to help teams understand what has happened. It can present complex threats in easy-to-understand language, advise on mitigation strategies and help with searches and investigations.

Cyber risk and incident reporting. GenAI also promises to make cyber risk and incident reporting much simpler. Vendors already are working on this capability. With the help of natural language processing (NLP), GenAI can turn technical data into concise content that non-technical people can understand. It can help with incident response reporting, threat intelligence, risk assessments, audits and regulatory compliance. And it can present its recommendations in terms that anyone can understand, even translating confounding graphs into simple text. GenAI could also be trained to create templates for comparisons to industry standards and leading practices.

GenAI’s reporting capabilities should prove invaluable in this new era of heightened cyber transparency. To wit: A recent law will soon require critical infrastructure entities in the US to report cyber incidents. Also, the Securities and Exchange Commission (SEC) has released rules requiring disclosures of material cyber incidents and material cyber risks in SEC filings. The European Union’s Digital Operational Resilience Act calls for timely and consistent reporting of incidents that affect financial entities’ information and communication technologies. Imagine having a tool that makes preparing these reports much easier.

Adaptive controls. Securing the cloud and software supply chain requires constant updates in security policies and controls — a daunting task today. Machine learning algorithms and GenAI tools could soon recommend, assess and draft security policies that are tailored to an organisation's threat profile, technologies and business objectives. These tools could test and confirm that policies are holistic throughout the IT environment. Within a zero trust environment, GenAI can automate and continually assess and assign risk scores for endpoints, and review access requests and permissions. An adaptive approach, powered by GenAI tools, can help organisations better respond to evolving threats and stay secure.

And more. Many vendors are pushing the limits of GenAI, testing what’s possible. As the technology improves and matures, we’ll see many more uses for it in cyber defence. It could be some time, however, before we see “defenceGPT’s” broad-scale use.

GenAI tools could help relieve the acute cyber talent shortage. Attrition is a growing problem for 39% of CISOs, CIOs and CTOs, according to our 2023 Global DTI survey. It’s hindering progress on cyber goals for another 15%.

Once GenAI frees security professionals from routine and mundane tasks such as detection and analysis, they may turn their focus to understanding — not just knowing — the causes of breaches and how best to respond to them. They can be better positioned to make fast decisions and take swift actions. They might cultivate true “deep learning” — in a human sense — of LLMs, and use them to invent new ways to secure the enterprise.

And they’ll be well equipped to pivot from finding answers — GenAI’s purview, now — to asking more meaningful questions not only of their AI models but also of one another, sparking imagination and insights that are truly new. You can help your security teams develop traits that AI won’t learn or automate, such as curiosity, empathy and intuition.

The use of GenAI for cyber defence — just like the use of GenAI across the business — will be affected by AI regulations, particularly concerning bias, discrimination, misinformation and unethical uses. Recent directives including the Blueprint for AI Bill of Rights from the White House and the draft European Union AI Act emphasise ethical AI. Policymakers around the world are scrambling to set limits and increase accountability — treating generative AI with urgency because of its potential for affecting broad swathes of society profoundly and rapidly.

Savvy enterprises will want to get ahead of AI mandates. Our respondents are well aware of their imminence: they’ve told us that AI regulations, more than any other, could significantly affect their future revenue growth.

Among the 37% of respondents anticipating AI regulation, three-quarters think the costs of compliance will also be significant. About two-fifths say they’ll need to make major changes in the business to comply.

Amid regulatory uncertainty, companies can control one thing: how they deploy GenAI in a responsible way in their environments, which can position themselves for compliance. Seven major developers of LLMs are showing the way. At the heart of a voluntary pledge they recently signed with the US government is an agreement to start placing guardrails around the technology’s capabilities.

Enthusiasm for AI is so high that 63% of our executive respondents said they’d personally feel comfortable launching GenAI tools in the workplace without any internal controls for data quality and governance. Senior execs in the business are even more so inclined (74%) than the tech and security execs.

However, without governance, adoption of GenAI tools opens organisations to privacy risks and more. What if someone includes proprietary information in a GenAI prompt? And without training in how to properly evaluate outputs, people might base recommendations on invented data or biased prompts.

Employees also need to be on guard against prompt injection risks, which Open Source Foundation for Application Security (OWASP) highlighted as the top security risk related to using LLMs. Prompt injections, also called jailbreaks, refer to prompts designed to elicit unintended responses by LLMs by overwriting system prompts or manipulating inputs from external sources.

The place to start with GenAI — as with almost any technology — is by laying the foundation for trust in its design, its function and its outputs. This foundation begins with governance, but concentrating on data governance and security concerns is especially important. The lion’s share of respondents overall say they intend to use GenAI in an ethical and responsible way: 77% agree with this statement.

GenAI tools will be able to quickly synthesise information from multiple sources to aid in human decision-making. And, given that 74% of breaches reportedly involve humans, governance of AI for defence ought to include a human element as well.

Enterprises would do well to adopt a responsible AI toolkit, such as PwC’s, to guide the organisation’s trusted, ethical use of AI. Although it’s often considered a function of technology, human supervision and intervention are also essential to AI’s highest and ideal uses.

Ultimately, the promise of generative AI rests with people. Every savvy user can — should — be a steward of trust. Invest in them to know the risks of using the technology as assistant, co-pilot or tutor. Encourage them to critically evaluate the outputs of generative AI models in line with your enterprise risk guardrails. Rally security professionals to follow responsible AI principles.

Select a country or region from the list to explore local insights

The 2024 Global Digital Trust Insights is a survey of 3,876 business, technology and security executives (CEOs, corporate directors, CFOs, CISOs, CIOs and C-Suite officers) conducted in May through July 2023.

Four out of ten executives are in large companies with $USD 5 billion or more in revenues. Importantly, 30% are in companies with $USD 10 billion or more in revenues.

Respondents operate in a range of industries, including industrial manufacturing (20%), financial services (20%), tech, media, and telecom (19%), retail and consumer markets (17%), energy, utilities, and resources (11%), health (9%) and government and public services (3%).

Respondents are based in 71 countries. The regional breakdown is Western Europe (32%), North America (28%), Asia Pacific (18%), Latin America (10%), Eastern Europe (5%), Africa (4%) and the Middle East (3%).

The Global Digital Trust Insights Survey was formerly known as the Global State of Information Security Survey (GSISS). In its 26th year, it’s the longest-running annual survey on cybersecurity trends. It’s also the largest survey in the cybersecurity industry and the only one that draws participation from senior business executives, not just security and technology executives.

PwC Research, PwC’s global Centre of Excellence for market research and insight, conducted this survey.

{{item.text}}

{{item.text}}